Weekly Streak Lab

Small, consistent weekly builds to keep my data engineering skills sharp: clean → model → validate → ship.

Business Intelligence Engineer · Seattle

I build analytics products that turn messy, real-world data into clear decisions—dashboards people trust, and pipelines that don’t break when things change.

A quick snapshot of how I think, what I enjoy, and why I’m in this work.

I started in operations and bookkeeping, where accuracy, communication, and clean records were essential. That foundation pushed me into analytics through Year Up and then Amazon, first as a Business Analyst Intern and now as a Business Intelligence Engineer.

As highlighted in my resume, my day-to-day work focuses on translating business questions into trusted metrics, decision-ready reporting, and scalable data workflows. I partner across teams to improve data quality, standardize KPI definitions, and deliver analytics that help leaders make faster, higher-confidence decisions.

Outside of work, I build fun personal projects to learn new skills and simplify real workflows for people, teams, and developers.

Rocky Mountains

Guitar

Snek study

Amazon

Seattle, WA, USA

Full-time · 2024 — Present

Internship · 2023 — 2024

Part-time · 2022 — 2023

Palma Trucking

Seattle, WA, USA

Full-time · 2022 — 2023

Western Governors University

Salt Lake City, UT, USA

Degree Program · 2025 — Expected 2028

Seattle Central College

Seattle, WA, USA

Technical Training · 2023 — 2024

What I use confidently today and what I am actively learning next.

Real builds that show how I think. Each one includes what I built, what I learned, and what I’d do next.

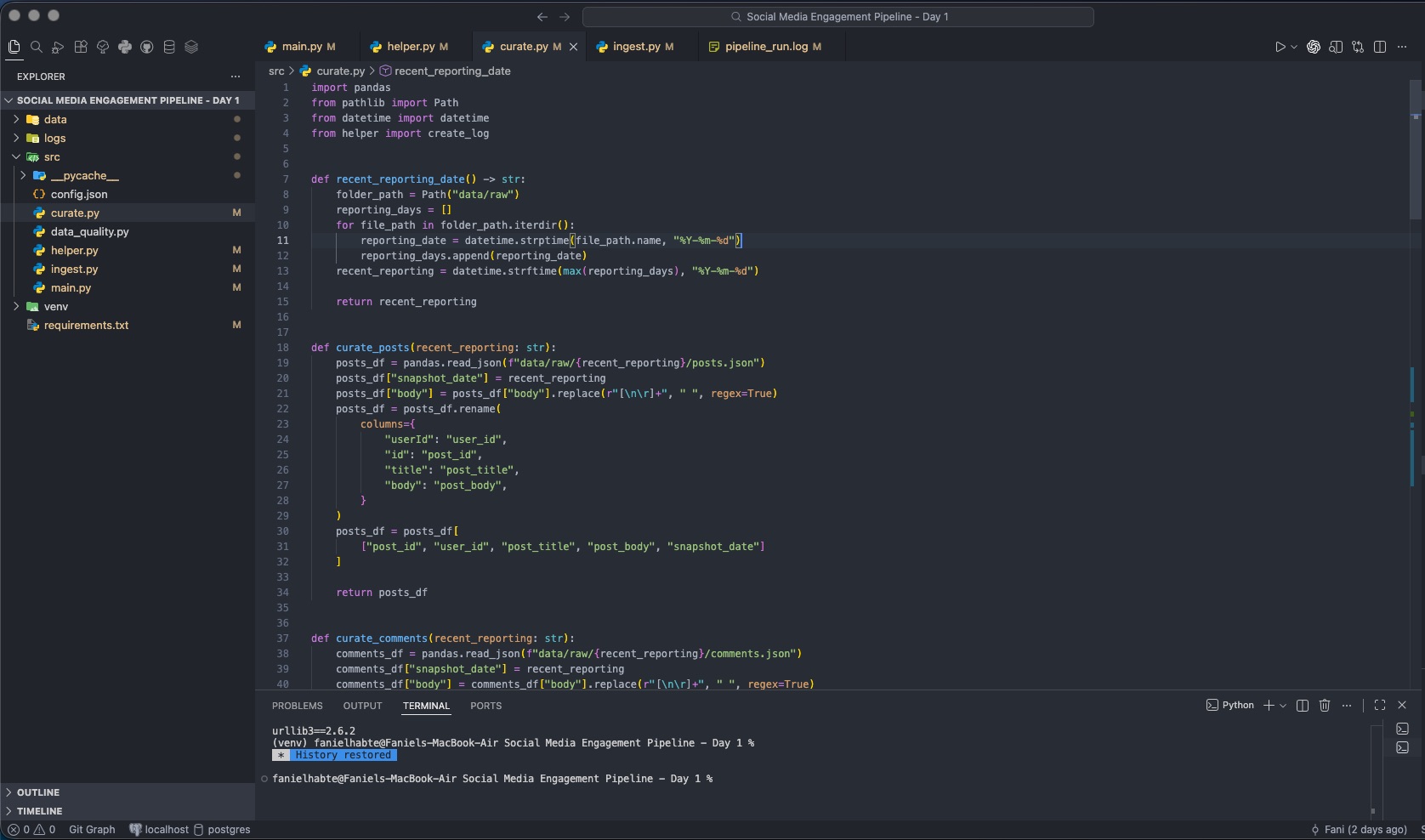

Small, consistent weekly builds to keep my data engineering skills sharp—clean → model → validate → ship.

A reproducible pipeline that turns raw geopolitical indicators into curated tables and narrative insights for trend and driver analysis.

End-to-end energy reporting pipelines built from EIA datasets, moving data from raw to curated layers and publishing analytics-ready tables with a simple app/dashboard layer.

A small-but-real pipeline that ingests JSON, cleans it, standardizes schema, and publishes curated tables for analysis.
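To make the ingest, clean, and standardize steps concrete, here is a minimal sketch using only the Python standard library. It is an illustration under stated assumptions, not the project's actual code: the `SCHEMA` columns (`id`, `name`, `amount`) and the helper names `standardize` and `curate` are hypothetical.

```python
from __future__ import annotations

import json

# Hypothetical target schema (column name -> type caster); not taken from the project.
SCHEMA = {"id": int, "name": str, "amount": float}


def standardize(record: dict) -> dict | None:
    """Normalize keys and cast values; return None when a row cannot be fixed."""
    # Clean: trim and lower-case keys so "ID", " Name " and "Amount" line up.
    normalized = {str(key).strip().lower(): value for key, value in record.items()}
    row = {}
    for column, cast in SCHEMA.items():
        value = normalized.get(column)
        if value is None:
            return None  # required column is missing
        try:
            row[column] = cast(value)
        except (TypeError, ValueError):
            return None  # value cannot be cast to the target type
    row["name"] = row["name"].strip()
    return row


def curate(raw_json: str) -> list[dict]:
    """Ingest a JSON array of objects and keep only rows that fit the schema."""
    records = json.loads(raw_json)
    rows = (standardize(record) for record in records)
    return [row for row in rows if row is not None]
```

In this sketch, rows that fail validation are dropped silently; a fuller version would probably route them to a rejects table so data quality issues stay visible.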

A lightweight data workbench where users upload datasets, run SQL-style analysis, and turn saved logic into reusable dbt-ready workflows.

A research-driven developer tooling project that explores a SQL-like query language for REST APIs, translating custom syntax into API calls and returning analysis-ready tabular outputs.
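The core idea of translating a SQL-like query into an API call can be sketched in a few lines. Everything specific here is an assumption made for illustration: the tiny `SELECT ... FROM ... WHERE ... LIMIT` grammar, the `fields` and `limit` query parameters, the `to_request_url` helper, and the `api.example.com` base URL are hypothetical and not the project's real syntax or endpoints.

```python
import re
from urllib.parse import urlencode

# Hypothetical mini-grammar: SELECT <fields> FROM <resource> [WHERE a = b AND ...] [LIMIT n]
QUERY_PATTERN = re.compile(
    r"^\s*SELECT\s+(?P<fields>.+?)\s+FROM\s+(?P<resource>\w+)"
    r"(?:\s+WHERE\s+(?P<where>.+?))?"
    r"(?:\s+LIMIT\s+(?P<limit>\d+))?\s*$",
    re.IGNORECASE,
)


def to_request_url(query: str, base_url: str) -> str:
    """Translate a SQL-like query string into a GET request URL."""
    match = QUERY_PATTERN.match(query)
    if match is None:
        raise ValueError(f"unsupported query: {query!r}")

    params = {}
    fields = [field.strip() for field in match["fields"].split(",")]
    if fields != ["*"]:
        params["fields"] = ",".join(fields)  # assumed parameter name

    if match["where"]:
        # Only equality filters joined by AND are handled in this sketch.
        for condition in re.split(r"\s+AND\s+", match["where"], flags=re.IGNORECASE):
            key, _, value = condition.partition("=")
            params[key.strip()] = value.strip().strip("'\"")

    if match["limit"]:
        params["limit"] = match["limit"]  # assumed parameter name

    url = f"{base_url.rstrip('/')}/{match['resource']}"
    query_string = urlencode(params)
    return f"{url}?{query_string}" if query_string else url
```

The sketch stops at building the URL. Sending the request and flattening the JSON response into rows would be the next stage, and a real parser would need a proper grammar rather than a single regular expression.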

A text-to-insights workflow that extracts sentiment and action items from feedback and returns structured JSON for downstream reporting.

A community-focused portal concept for managing member records, attendance, and contribution tracking with clear, role-based visibility.

Let’s connect — I respond fastest by email.

Open to thoughtful collaboration, feedback, and knowledge sharing across analytics, BI, and data engineering.